New technology helps robotic cameras act like real camera operators and follow action with a human feel. Some day, computerized cameras and editing software may be able to produce a quality program entirely on their own, especially in this “good enough” era of professional TV production.

From Science 2.0: http://www.science20.com/news_articles/this_robot_learns_your_every_basketball_move-152044

Automated cameras make it possible to broadcast sporting events, but their shot choices lack the creativity of a human camera operator or director. The camera just goes back and forth following the ball.

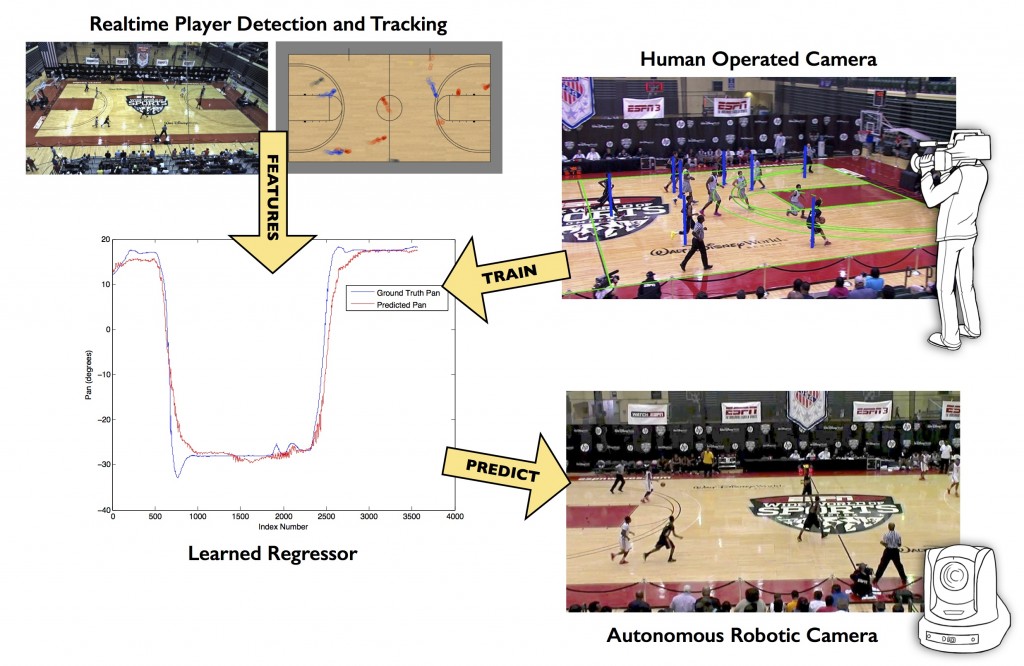

Disney Research engineers have now made it possible for robotic cameras to learn from human operators how to better frame shots of a basketball game. Instead of tracking a key object, as legacy systems do, the new work is designed to mimic a human camera operator who can anticipate action and adjust the camera’s pan, tilt and zoom controls to allow more space, or “lead room,” in the direction that the action is moving. That anticipation is why human-controlled video imagery is smooth and aesthetically pleasing, while the automated kind has always looked robotic.

Peter Carr, a Disney Research engineer, and Jianhui Chen, an intern and a Ph.D. student in computer science at the University of British Columbia, devised a data-driven approach that allows a camera system to monitor an expert camera operator during a basketball game. The automated system uses machine learning algorithms to recognize the relationship between player locations and corresponding camera configurations.

Credit: Disney Research

“We don’t use any direct information about the ball’s location because tracking the ball with a single camera is difficult,” Carr said. “But players are coached to be in the right place at the right time, so their formations usually give strong clues about the ball’s location.”

Carr and Chen demonstrated their system on a high school basketball game. They used two cameras: a broadcast camera operated by a human expert, and a stationary camera that the computer used to detect and track the players automatically.

“Because the main broadcast camera in basketball maintains a wide shot of the court, we focused on predicting the appropriate pan angle of the camera,” Carr said. Following supervised learning based on the operator’s actions, the system was able to predict how to pan the camera in a way that was superior to the best previous algorithm and that did indeed mimic a human operator.
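To make the idea concrete, here is a minimal sketch of learning a pan angle from player positions. It is not the Disney Research method: the article does not say which learner or features were used, so a one-feature least-squares fit stands in for the real model. The function names, the court coordinates in metres, and the training numbers are all made up for illustration.

```python
from statistics import mean

def centroid_x(players):
    """Mean x-coordinate (metres along the court) of the tracked players in one frame."""
    return mean(x for x, _y in players)

def fit_pan_model(frames, pan_angles):
    """Fit pan = slope * centroid_x + intercept by ordinary least squares.

    frames: list of frames, each a list of (x, y) player positions
    pan_angles: the human operator's pan angle (degrees) for each frame
    """
    xs = [centroid_x(frame) for frame in frames]
    x_bar, y_bar = mean(xs), mean(pan_angles)
    sxx = sum((x - x_bar) ** 2 for x in xs)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, pan_angles))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept

def predict_pan(model, players):
    """Predict a pan angle (degrees) for a new frame of player positions."""
    slope, intercept = model
    return slope * centroid_x(players) + intercept

# Hypothetical training data: player positions from the stationary camera,
# paired with the pan angle the human operator chose at the same moment.
training_frames = [
    [(4.0, 5.0), (6.0, 9.0)],     # play at the left end of the court
    [(13.0, 7.0), (15.0, 8.0)],   # play near mid-court
    [(22.0, 3.0), (24.0, 11.0)],  # play at the right end of the court
]
operator_pans = [-27.0, 0.0, 27.0]

model = fit_pan_model(training_frames, operator_pans)
print(predict_pan(model, [(9.0, 6.0), (11.0, 7.0)]))
```

The sketch only shows the supervised setup described above: player locations go in, and the operator's camera setting is the target. A real system would need richer features than a centroid and some smoothing of the predicted angles over time.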

Carr said he expects the method can be adapted to other sports, possibly with additional features. Future work also will include mimicking the auxiliary cameras used for cutaway shots in multi-camera productions.

The work was presented at WACV 2015, the IEEE Winter Conference on Applications of Computer Vision, in Waikoloa Beach, Hawaii.